AI art is everywhere these days. From social media posts to marketing campaigns, everyone's jumping on the AI-generated art train. But here's the thing – most people are doing it wrong. And when it comes to cultural representation, the stakes get even higher.

Whether you're a designer, marketer, or just someone experimenting with AI tools, you're probably making some critical mistakes that could be hurting your work (and potentially offending entire communities). Let's dive into the seven biggest pitfalls and how to fix them.

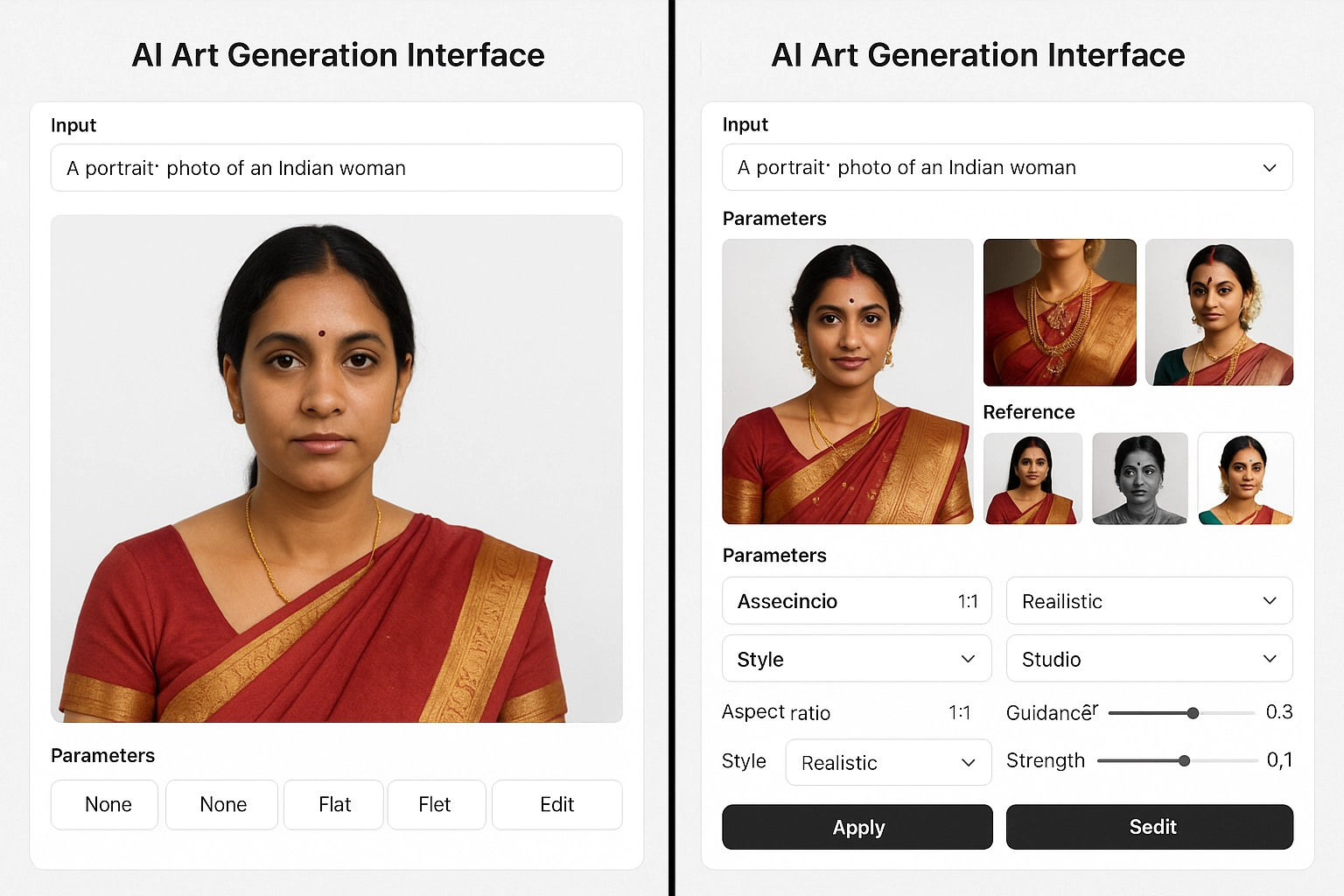

Mistake #1: Relying Too Much on Default Settings

Most people fire up their AI art tool, type in a prompt, and call it a day. Big mistake. Relying on a pre-trained model's defaults is like using the same Instagram filter as everyone else – you're going to get generic, homogenized results.

This becomes especially problematic when dealing with cultural themes. Default models often perpetuate the most common (and often stereotypical) representations they've seen in their training data.

How to fix it: Spend time steering the model instead of accepting its defaults. If you're using tools like Midjourney or Stable Diffusion, experiment with different style parameters, reference images, and negative prompts. When working with cultural elements, research authentic visual references first, then guide your AI toward those specific details rather than letting it default to whatever it "thinks" a culture looks like.
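One way to make this a habit is to write the request down as structured data before you touch the tool. The sketch below is only an illustration: `PromptSpec` and its fields are hypothetical names, not part of Midjourney or Stable Diffusion, and the placeholder details stand in for whatever your own research turns up. How the resulting strings and reference images get passed to a generator depends on the tool you use.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for one generation request (not a real tool's API)."""
    subject: str
    researched_details: list[str] = field(default_factory=list)
    negative_terms: list[str] = field(default_factory=list)
    reference_images: list[str] = field(default_factory=list)

    def positive_prompt(self) -> str:
        # Researched details follow the subject directly so they are not an afterthought.
        return ", ".join([self.subject, *self.researched_details])

    def negative_prompt(self) -> str:
        # Terms you want the model to steer away from.
        return ", ".join(self.negative_terms)


spec = PromptSpec(
    subject="portrait of a weaver at a loom",
    # Placeholders: fill these in from authentic references, not from guesswork.
    researched_details=["<pattern name from your research>", "<garment detail from your research>"],
    negative_terms=["costume", "generic tribal pattern"],
    reference_images=["refs/loom-01.jpg"],  # hypothetical path
)

print(spec.positive_prompt())
print(spec.negative_prompt())
```

Keeping the researched details and the reference images in one place also makes it obvious when you are about to generate something without having done the research first: the lists are simply empty.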

Mistake #2: Ignoring Your Training Data's Blind Spots

Here's a reality check: AI models are only as good as the data they're trained on. And unfortunately, most training datasets have massive gaps when it comes to cultural representation. They're often heavily skewed toward Western, white perspectives, which means your AI is basically starting with built-in biases.

When you prompt for "beautiful people" or "traditional clothing," the AI will default to whatever it saw most often during training. Spoiler alert: it probably wasn't a diverse representation of global cultures.

How to fix it: Be extremely specific in your prompts. Instead of "traditional dress," specify "traditional Nigerian Igbo attire" or "authentic Hmong ceremonial clothing." Don't assume the AI knows what you mean – spell it out. And always fact-check cultural elements afterward to ensure accuracy.
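If you write a lot of prompts, a quick self-check can catch the generic phrasing before it reaches the model. This is a minimal sketch, assuming a hand-maintained list of phrases; `VAGUE_PHRASES` and `find_vague_phrases` are made-up names, and the list only contains the examples mentioned above. It does simple substring matching and cannot judge whether a specific prompt is accurate, so the fact-checking step still applies.

```python
# Phrases called out above as too generic; extend this list for your own work.
VAGUE_PHRASES = ["traditional dress", "traditional clothing", "beautiful people"]


def find_vague_phrases(prompt: str) -> list[str]:
    """Return the generic phrases found in a prompt (case-insensitive substring match)."""
    lowered = prompt.lower()
    return [phrase for phrase in VAGUE_PHRASES if phrase in lowered]


print(find_vague_phrases("A woman in traditional dress at a market"))
# ['traditional dress']
print(find_vague_phrases("A woman in traditional Nigerian Igbo attire at a market"))
# []
```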

Mistake #3: Treating Details Like They Don't Matter

AI routinely butchers the details, especially when it comes to cultural elements. Jewelry, traditional patterns, religious symbols, architectural details – these all get mangled because the AI is essentially playing visual telephone with billions of images.

The problem? These details matter. A lot. Getting them wrong isn't just aesthetically poor – it can be deeply disrespectful to the cultures you're trying to represent.

How to fix it: Plan for post-processing. Generate your base image with AI, then manually refine the cultural details using traditional design tools. Partner with cultural consultants when working on projects involving specific traditions. Your AI can give you a starting point, but human knowledge and care need to finish the job.

Mistake #4: Accepting Gibberish Text and Symbols

AI image generators have a notorious problem with text and symbols. They'll generate something that looks like writing from a distance, but up close, it's complete nonsense. This becomes a serious issue when dealing with cultural scripts, religious symbols, or traditional text elements.

Imagine creating art that's supposed to honor a particular culture, but the "traditional symbols" are actually meaningless squiggles. That's not just bad design – it's potentially offensive.

How to fix it: Never trust AI-generated text or symbols. Always add authentic text and symbols manually after generation. If you're not familiar with the script or symbols you want to include, research them thoroughly or work with someone from that cultural background. Accuracy isn't just about looking good – it's about showing respect.

Mistake #5: Ignoring Physical Laws (And Cultural Logic)

AI creates some wild stuff. Chairs with legs that don't touch the ground, buildings that would collapse immediately, jewelry that defies physics. It's amusing until you're trying to depict cultural artifacts, traditional architecture, or ceremonial objects that have specific structural requirements and deep meaning.

When AI gets the physics wrong on culturally significant items, it's not just a technical error – it's a failure to understand and represent the cultural knowledge embedded in those objects.

How to fix it: Research the function and construction of cultural elements you want to include. Understand how traditional clothing is actually worn, how ceremonial objects are used, how architectural elements work together. Use this knowledge to guide your prompts and catch errors in your results.

Mistake #6: Not Challenging Stereotypical Outputs

This is the big one. AI models often default to stereotypical representations because those are the most common images they've encountered. When you ask for images representing different cultures, you might get results that perpetuate harmful stereotypes or overly simplistic portrayals.

The problem compounds when these AI-generated images get shared widely, reinforcing these narrow representations in people's minds and potentially in future AI training data.

How to fix it: Question your results. Do the people in your images represent authentic diversity within that culture? Are you showing the full richness of traditions, or just the most "exotic" elements that might appeal to outsiders? Push back against one-dimensional representations. Include modern expressions of cultural identity, not just historical or "traditional" ones.

Mistake #7: Thinking AI Art Is Culturally Neutral

Here's the biggest misconception: that AI art creation is just a technical process without cultural implications. Every image you generate intersects with questions of representation, appropriation, and respect for traditional artistic practices.

When you create AI art that draws from cultural traditions, you're making choices about how those cultures are represented in digital spaces. That's not neutral – it's deeply cultural work that requires intention and responsibility.

How to fix it: Approach AI art creation with cultural humility. Ask yourself: Am I the right person to be representing this culture? Have I done my research? Am I contributing to authentic representation or perpetuating stereotypes? Consider collaborating with people from the cultures you want to represent, rather than going it alone.

The Path Forward

AI art isn't inherently problematic – but how we use it can be. The technology gives us incredible creative power, but with that power comes responsibility. When we're dealing with cultural representation, we need to bring the same care and research we'd bring to any other form of cultural work.

The goal isn't to avoid cultural elements entirely. It's to approach them with the respect, understanding, and collaboration they deserve. This means doing your homework, questioning your results, and always prioritizing authentic representation over aesthetic convenience.

Remember, every image you create and share shapes how cultures are perceived in our increasingly visual world. Make sure you're contributing something positive to that conversation.

Start small: pick one cultural element you've been representing in your work and dive deep into researching its authentic context and meaning. Then apply that same level of care and attention to everything else you create. Your art – and the communities you represent – will be better for it.

The future of AI art isn't just about better algorithms or faster processing. It's about more thoughtful, culturally aware creators who understand that good art respects both aesthetic and cultural integrity. That's a future worth creating.